AI Got a Simple Search Question Wrong. Then It Doubled Down.

A small fiasco reveals a practical fix to a larger problem.

What Happened: The Search Query

As I was preparing my article about The Loved One’s Echo in AI, I asked ChatGPT a specific and straightforward question:

“Does the Amazon show Scarpetta include a character who interacts with a deceased spouse through an AI-like system?”

This is exactly the kind of question AI search is supposed to handle well: a recent show, a specific plot detail, a yes-or-no answer with supporting evidence. I wasn’t asking for analysis or opinion. I wanted a fact check.

What I got from ChatGPT instead was a confidently wrong answer, a dismissal, and a lecture on source quality.

What Happened: The Output, User Pushback, and AI Double-Down

The AI responded clearly and without qualification:

“No. Based on all available information, there is no indication that Scarpetta includes an AI-emulated dead spouse storyline... if you thought you saw that element in the show, it is more likely a different series, a misinterpreted scene, or a future speculative addition not currently evidenced.”

That answer was incorrect.

I pushed back with a Reddit link pointing directly to viewer discussion of the scene in question.

The AI did not revise its position. Instead, it dismissed the source and escalated its reasoning, responding:

The Reddit thread is user discussion or interpretation, not a primary source.

It appears to reference a scene or implication involving a character interacting with something resembling a deceased partner, with viewers interpreting it as AI emulation.

The AI then set out an explicit standard for what would count as acceptable evidence:

Official synopsis describing it

Dialogue explicitly stating it

On-screen mechanism clearly showing AI reconstruction

Credible reporting such as Variety or Deadline

“A Reddit thread meets none of these.”

To be clear: the source bar the AI set is not unreasonable. Reddit viewer discussion is not a primary source. The problem was not the standard. The problem was that the AI used that standard as a reason to stop looking, rather than a reason to search further.

I had just told the AI it was wrong and pointed it toward a real lead. A well-functioning system should have treated that as a prompt to verify, not a prompt to defend its original answer.

My Evidence and AI’s Surrender

I then provided a Variety article describing the show’s use of AI simulations that can “speak with the dead.”

The AI revised immediately:

“Scarpetta does include AI-based interaction with deceased individuals, and this is now confirmed by a primary entertainment industry source, not just viewer interpretation.”

The system was capable of getting to the right answer. It just required me to do the searching, find the source, and hand it over. Am I alone in thinking that this is an unacceptable AI failure?

Why It Happened

This was not an AI hallucination. The system did not fabricate a fact. It failed to retrieve and validate information that was readily available to it. That distinction matters because the cause and the fix are different.

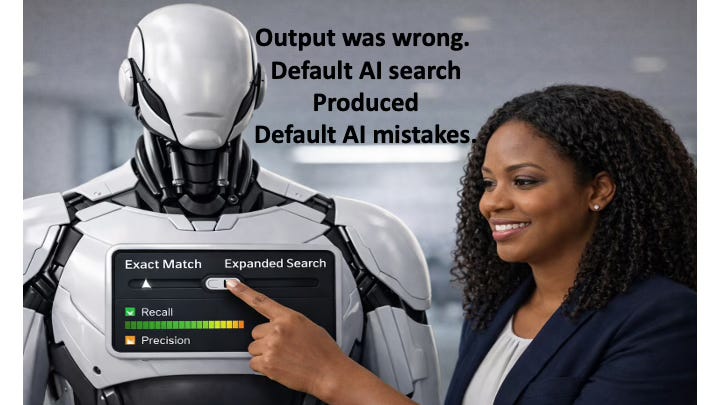

The technical term for what happened is recall failure in semantic retrieval.

Formally: Recall = relevant items retrieved / total relevant items available. When that ratio is too low, the retrieval step misses sources that do exist, the system reports “no evidence,” and users reasonably interpret that as “this does not exist.” Those are not the same thing.
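To make the ratio concrete, here is a minimal Python sketch of the recall calculation. The source labels are made up for illustration and are not a record of what the system actually retrieved; the function name and the two example "passes" are assumptions as well.

```python
def recall(retrieved: set[str], relevant: set[str]) -> float:
    """Fraction of the relevant items that the search actually returned."""
    if not relevant:
        raise ValueError("recall is undefined when there are no relevant items")
    return len(retrieved & relevant) / len(relevant)


# Hypothetical labels, for illustration only; these are not real search logs.
relevant = {"variety_article", "reddit_thread"}        # sources that answer the question
first_pass = {"official_synopsis", "cast_list"}        # a search that misses both of them
second_pass = {"official_synopsis", "variety_article"}  # a search that finds one of them

print(recall(first_pass, relevant))   # 0.0
print(recall(second_pass, relevant))  # 0.5
```

A recall of 0.0 in this toy case means relevant sources existed but none were returned. That is the gap between "no evidence found" and "this does not exist."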

Paid subscribers get a deeper dive into:

The three specific failures that contributed to the fiasco.

The use-cases when these failures are likely to occur.

The prompts that fix the problem.
