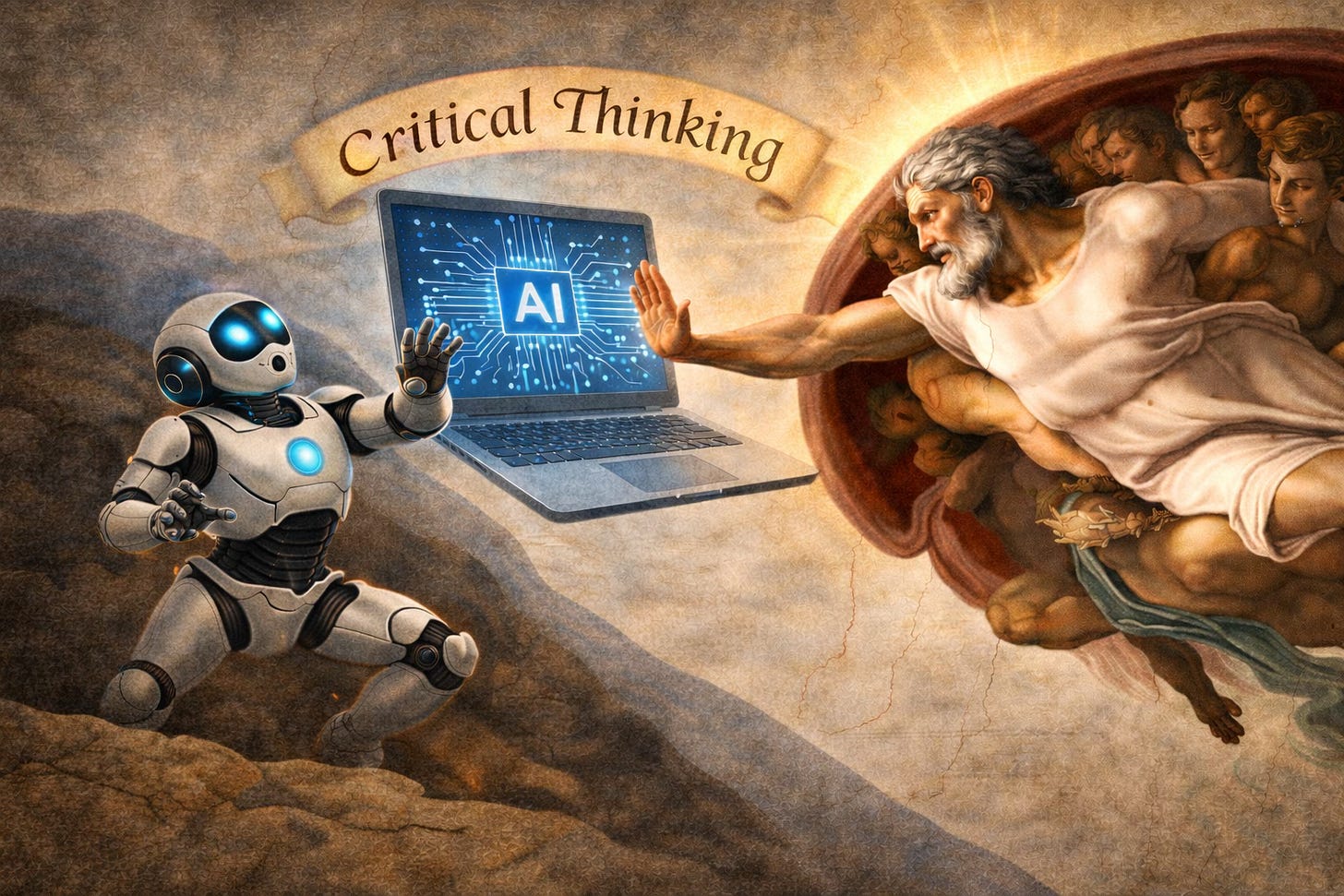

No Cognitive Surrender! Win Over Conversational AI

14 practical tips to safeguard yourself against the core problem

We are all at risk of surrendering our critical thinking in our dialogues with the chatbots and voice assistants of Conversational AI.

In their Wharton podcast “Are We Outsourcing Our Thinking to AI?”, University of Pennsylvania scholars Gideon Nave and Steven D. Shaw discuss “cognitive surrender”, the human tendency to accept AI-generated information whether or not it is accurate.

To maintain our oversight and good judgment over these systems, in this article StrictQuality.AI describes nine essential safeguards and five supporting habits for all of us to practice.

To make the safeguards and habits easy to apply, we grouped them into the four stages of interaction with AI: adopting the initial mindset, analyzing responses, deciding whether to act on advice, and maintaining healthy boundaries over the long term.

If you like this kind of reporting, please consider subscribing to StrictQuality.AI so you will be notified when future articles are posted.

There are many reports about the serious, even tragic, difficulties some of us are having when we allow Conversational AI to be our guide:

Mother sues AI chatbot company Character.AI, Google over son’s suicide.

He Had Dangerous Delusions. ChatGPT Admitted It Made Them Worse.

‘I Feel Like I’m Going Crazy’: ChatGPT Fuels Delusional Spirals.

AI Chatbots Linked to Psychosis, Say Doctors.

Sycophantic Chatbots Cause Delusional Spiraling, Even in Ideal Bayesians.

Gemini Said They Could Only Be Together if He Killed Himself. Soon, He Was Dead.

Seeking a Sounding Board? Beware the Eager-to-Please Chatbot.

Over 4,732 Messages, He Fell in Love with an AI Chatbot. Now He’s Dead.

It is important to be precise about what these incidents do and do not prove.

The available reporting does not show that Conversational AI systems directly cause tragedy or suicide. People who experience severe psychological crises often face multiple contributing factors.

And despite its serious flaws, people are learning how to use Conversational AI safely and successfully. For example, The New York Times reported on the case of an 85-year-old widow living alone in a remote northwest area of the U.S. who, as part of a program aimed at helping seniors remain independent at home, agreed to try a companion robot called ElliQ.

At first she was skeptical of the idea of talking with a machine. Over time, however, ElliQ became part of her daily routine. It initiated conversations, suggested activities, and encouraged games, exercise, and cognitive engagement. The robot’s cheerful tone and persistent engagement helped fill long stretches of solitude after the death of her husband.

Despite these successes, the recurrence of distressing cases like the ones listed above has caught the attention of Frontier AI providers. These companies are starting to improve their models to better recognize and respond to users’ signs of mental and emotional trouble.

StrictQuality.AI believes that users have a responsibility to protect themselves against the core problem. Interacting with Conversational AI requires adopting a few deliberate safeguards and habits.

The Core Problem to Overcome

The core problem begins with a simple mistake: treating the system as if it were a person instead of a tool.

This can lead you to surrender your critical thinking, judgment, and decision making. When this happens, expect real and undesirable consequences.

Fortunately, small behaviors that you can introduce into an AI session will make a big difference for the better. For example, during a conversation you might tell it:

“I realize I’m talking to you as if you’re human. But I know you’re not really capable of emotions, not actually feeling anything.”

This does two things.

First, it reminds you of the correct mental model to have in a chat with AI. Second, it shifts the conversation back into a tool-using mindset.

After that reset, you’re much more likely to:

Question the output.

Verify information.

Resist emotional persuasion.

In a high-speed AI environment, small habits like these can prevent your gradual drift toward cognitive surrender.

Paid subscribers get a deep dive into using all 14 safeguards and habits when interacting with Conversational AI.
